Understanding Data Lakes in Data Analysis

Data Lakes in Brief

- A data lake is a centralized repository designed to store a vast amount of data in its native, raw format.

- It can handle structured, semi-structured, and unstructured data, including everything from relational databases to images and videos.

- Data lakes support various tasks such as reporting, visualization, advanced analytics, and machine learning.

- They can be implemented on-premises or in the cloud with services from Amazon, Microsoft, Google, and others.

- Unlike data warehouses, which require a schema to be defined before data is loaded, data lakes store data without predefined schemas, allowing for more agile exploration and analysis.

The term "Data Lake" has become a buzzword in the world of big data and analytics. But what exactly is a data lake, and why has it become so crucial for organizations dealing with massive volumes of data? This article will dive into the concept of data lakes, their advantages, how they compare to traditional data warehouses, and their role in data analysis.

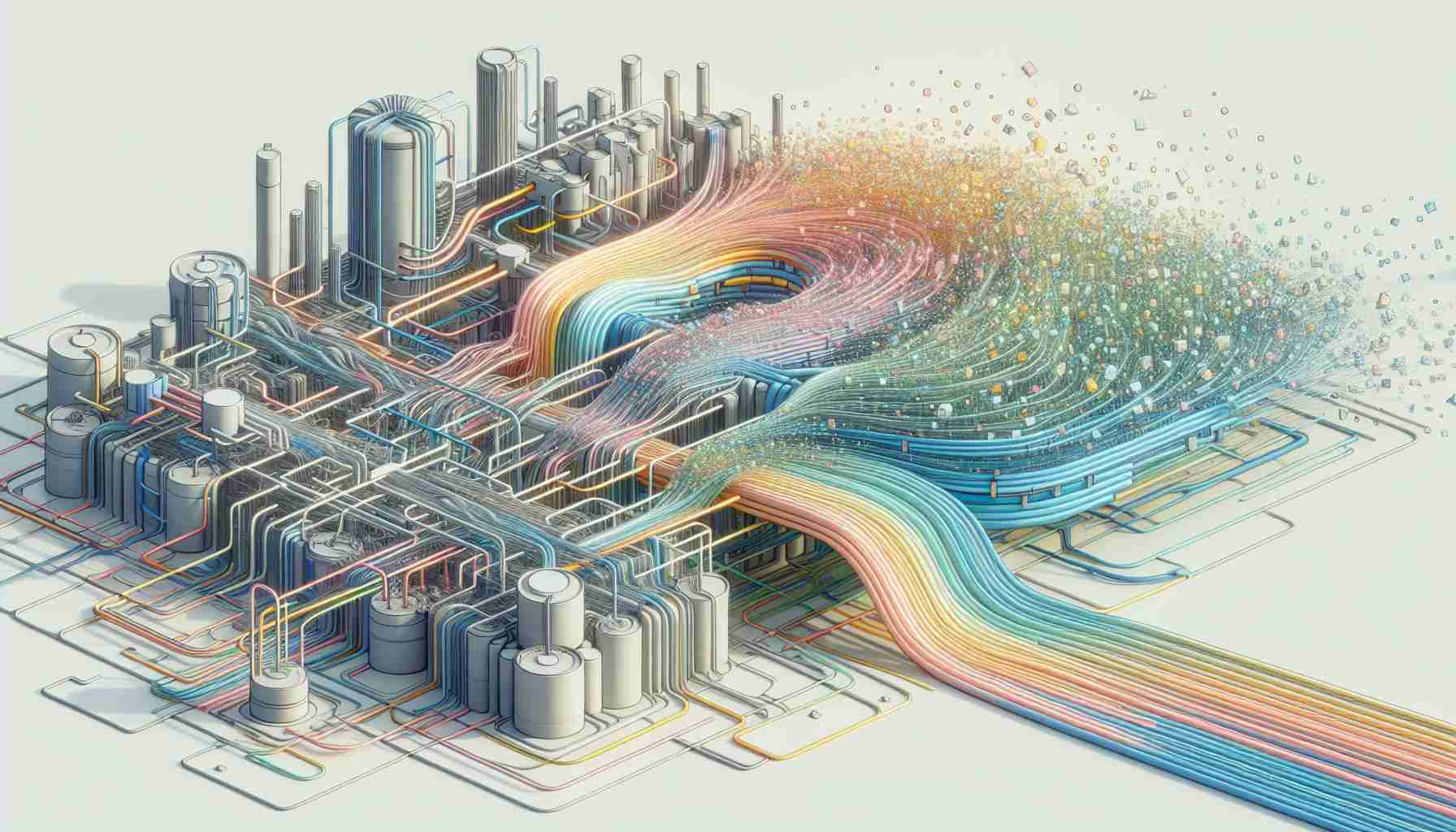

Introduction to Data Lakes

In the simplest terms, a data lake is a vast pool of raw data. The purpose of a data lake is to store large amounts of data in its native format until it is needed. This includes structured data from traditional databases, semi-structured data like CSV files and logs, and unstructured data such as emails, documents, and multimedia content.

The key characteristic of a data lake is its ability to store data at any scale and in various formats without the need to convert or structure the data beforehand. This flexibility makes data lakes an attractive option for organizations that want to leverage their data for insights without being constrained by the rigid architecture of traditional data warehouses.

The Architecture of a Data Lake

A data lake typically has a flat architecture. Data is stored in object blobs or files that can be spread across multiple servers or clusters. This architecture allows for high scalability and accessibility, as data can be added from various sources without the need for extensive preprocessing.

Metadata and indexing are crucial components of a data lake. They enable users to locate and retrieve data efficiently. Without proper metadata and indexing strategies, a data lake can quickly become a "data swamp," where the sheer volume of data makes it difficult to find and use valuable information.
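The flat layout and the role of metadata described above can be illustrated with a small sketch. It is a toy under stated assumptions, not how any particular product works: the `TinyLake` class, its method names, and the metadata fields (`source`, `format`, `tags`) are hypothetical, and a temporary local directory stands in for object storage.

```python
import tempfile
from pathlib import Path


class TinyLake:
    """Toy data lake: a flat key -> bytes store plus a metadata catalog (hypothetical)."""

    def __init__(self, root):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)
        self.catalog = {}  # object key -> metadata dict

    def _path(self, key):
        # Flat namespace: "/" in a key is only a naming convention, not a folder.
        return self.root / key.replace("/", "__")

    def put(self, key, data, *, source, fmt, tags=()):
        # Store the bytes untouched, in whatever format they arrived.
        self._path(key).write_bytes(data)
        self.catalog[key] = {
            "source": source,
            "format": fmt,
            "tags": sorted(tags),
            "size": len(data),
        }

    def get(self, key):
        return self._path(key).read_bytes()

    def find(self, tag=None, fmt=None):
        # Locate objects through the catalog instead of scanning every file.
        return sorted(
            key
            for key, meta in self.catalog.items()
            if (tag is None or tag in meta["tags"])
            and (fmt is None or meta["format"] == fmt)
        )


with tempfile.TemporaryDirectory() as tmp:
    lake = TinyLake(tmp)
    lake.put("sales/2024/orders.csv", b"id,total\n1,9.50\n",
             source="erp", fmt="csv", tags=["sales"])
    lake.put("web/clicks.jsonl", b'{"page": "/home"}\n',
             source="website", fmt="jsonl", tags=["web"])
    lake.put("sales/receipt.png", b"\x89PNG...",
             source="scanner", fmt="png", tags=["sales"])

    sales_keys = lake.find(tag="sales")
    csv_keys = lake.find(fmt="csv")
    raw_clicks = lake.get("web/clicks.jsonl")

print(sales_keys)
print(csv_keys)
```

Without the `catalog` dictionary, the only way to find the sales files would be to open and inspect every object, which is the "data swamp" situation in miniature.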

Data Lake vs. Data Warehouse

It's important to understand the difference between a data lake and a data warehouse. A data warehouse is a repository for structured, filtered data that has already been processed for a specific purpose. Its structure is defined by schemas that are applied to the data before it is written to the database, an approach known as schema-on-write.

In contrast, a data lake follows a schema-on-read approach: the data is kept in its native format, whether structured, semi-structured, or unstructured, and a schema is applied only when the data is read for analysis. This approach provides more flexibility but requires more robust data management and governance to prevent data from becoming unusable.
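A minimal sketch of applying a schema only at read time might look like the following. The sample events, the `PURCHASE_SCHEMA` mapping, and the `read_with_schema` helper are all made up for illustration; real query engines do this at far larger scale and with richer type systems.

```python
import json

# Raw events exactly as they arrived; records need not share the same fields.
RAW_EVENTS = """\
{"user": "ana", "amount": "19.99", "ts": "2024-03-01T10:00:00"}
{"user": "ben", "amount": 5, "coupon": "SPRING"}
{"user": "cy"}
"""

# A schema chosen by one particular analysis: field -> (converter, default).
PURCHASE_SCHEMA = {
    "user": (str, ""),
    "amount": (float, 0.0),
}


def read_with_schema(raw_text, schema):
    """Parse raw JSON lines and project each record onto `schema` at read time."""
    rows = []
    for line in raw_text.splitlines():
        if not line.strip():
            continue
        record = json.loads(line)
        row = {}
        for field, (convert, default) in schema.items():
            # Fields outside the schema are ignored; missing ones get a default.
            row[field] = convert(record[field]) if field in record else default
        rows.append(row)
    return rows


rows = read_with_schema(RAW_EVENTS, PURCHASE_SCHEMA)
total = sum(row["amount"] for row in rows)
print(rows)
print(round(total, 2))
```

The stored text is never modified, so a different analysis could read the same events with a different schema, for example one that keeps `coupon` and `ts`. A warehouse would instead have rejected or reshaped these records when they were loaded.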

Advantages of Data Lakes

- Scalability: Data lakes are designed to scale out, accommodating growing data volumes and more users by adding storage and compute capacity rather than redesigning the system.

- Flexibility: They can store any type of data, from any source, in its native format, allowing for a wide range of analytical possibilities.

- Cost-effectiveness: Especially when implemented in the cloud, data lakes can be more cost-effective than traditional data warehouses, because storage can grow incrementally and data does not have to be transformed before it is loaded.

- Advanced analytics: Data lakes support machine learning (including deep learning) and predictive analytics, providing valuable insights that can drive business decisions.

Implementing a Data Lake

When implementing a data lake, organizations must consider several factors:

- Data Governance: Effective governance policies are essential to ensure data quality and accessibility.

- Security: Robust security measures must be in place to protect sensitive data.

- Integration: The data lake should integrate seamlessly with existing systems and tools for analysis and reporting.

- Management: Continuous monitoring and management are required to maintain the data lake's performance and prevent it from becoming a data swamp.
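As one way to picture the governance and security points above, the sketch below audits a metadata catalog against a simple policy. Everything here is an assumption made for illustration: the required fields, the `classification` and `encrypted` attributes, and the `audit_catalog` function do not come from any specific platform.

```python
# Hypothetical policy: every object must declare these metadata fields.
REQUIRED_FIELDS = ("owner", "description", "classification")


def audit_catalog(catalog):
    """Return {object key: [problems]} for entries that violate the policy."""
    findings = {}
    for key, meta in catalog.items():
        problems = [f"missing {field}" for field in REQUIRED_FIELDS if not meta.get(field)]
        # Hypothetical security rule: sensitive data must be flagged as encrypted.
        if meta.get("classification") == "sensitive" and not meta.get("encrypted", False):
            problems.append("sensitive data stored unencrypted")
        if problems:
            findings[key] = problems
    return findings


catalog = {
    "sales/orders.csv": {
        "owner": "finance",
        "description": "Daily order export",
        "classification": "internal",
    },
    "hr/salaries.parquet": {
        "owner": "hr",
        "description": "Payroll snapshot",
        "classification": "sensitive",
        "encrypted": False,
    },
    "tmp/dump_003.bin": {},  # no metadata at all: a data swamp in the making
}

findings = audit_catalog(catalog)
for key, problems in findings.items():
    print(key, "->", "; ".join(problems))
```

Running a check like this on a schedule is one form the "continuous monitoring" mentioned above could take; the specific rules would depend on the organization's own policies.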

Conclusion

Data lakes have emerged as a powerful tool for organizations looking to capitalize on their data. By providing a flexible, scalable, and cost-effective solution for storing and analyzing diverse datasets, data lakes enable businesses to uncover insights that can lead to better decision-making and competitive advantages.

However, the success of a data lake implementation depends on careful planning, robust governance, and effective management. As the volume and variety of data continue to grow, data lakes will likely become an even more integral part of the data management and analytics landscape.

FAQs on Data Lakes

What is a data lake? A data lake is a centralized repository that can store large volumes of structured, semi-structured, and unstructured data in its raw format.

How does a data lake differ from a data warehouse? A data warehouse stores structured data that has been processed for a specific purpose, while a data lake stores raw data in its native format for on-demand processing and analysis.

What are the benefits of using a data lake? Data lakes offer scalability, flexibility, cost-effectiveness, and support for advanced analytics, making them suitable for businesses that require agile data exploration and analysis.

Can data lakes only be implemented in the cloud? No, data lakes can be implemented both on-premises and in the cloud. Cloud-based data lakes are popular due to their scalability and cost-efficiency.

What is a data swamp? A data swamp is a pejorative term for a data lake that has become unmanageable and unusable because of poor data governance and a lack of proper management.
